Games

Mathematical game theory, a branch of economics, views any multiagent environment as a game, provided that the impact of each agent on the others is "significant," regardless of whether the agents are cooperative or competitive. In AI, the most common games are of a rather specialized kind: what game theorists call deterministic, turn-taking, two-player, zero-sum games of perfect information (such as chess). In our terminology, this means deterministic, fully observable environments in which two agents act alternately and in which the utility values at the end of the game are always equal and opposite. For example, if one player wins a game of chess, the other player necessarily loses. It is this opposition between the agents' utility functions that makes the situation adversarial.

Games have engaged the intellectual faculties of humans, sometimes to an alarming degree, for as long as civilization has existed. For AI researchers, the abstract nature of games makes them an appealing subject for study. The state of a game is easy to represent, and agents are usually restricted to a small number of actions whose outcomes are defined by precise rules. Physical games, such as croquet and ice hockey, have much more complicated descriptions, a much larger range of possible actions, and rather imprecise rules defining the legality of actions. With the exception of robot soccer, these physical games have not attracted much interest in the AI community.

Games, unlike most of the toy problems studied in Chapter 3, are interesting because they are too hard to solve. For example, chess has an average branching factor of about 35, and games often go to 50 moves by each player, so the search tree has about $35^{100}$ or $10^{154}$ nodes (although the search graph has "only" about $10^{40}$ distinct nodes). Games, like the real world, therefore require the ability to make some decision even when calculating the optimal decision is infeasible. Games also penalize inefficiency severely. Whereas an implementation of A* search that is half as efficient will simply take twice as long to run to completion, a chess program that is half as efficient in using its available time probably will be beaten into the ground, other things being equal. Game-playing research has therefore spawned a number of interesting ideas on how to make the best possible use of time.
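The size estimate above can be checked with a few lines of arithmetic. The following is a minimal sketch that simply takes the figures quoted in the text (branching factor 35, 100 plies) as given; the variable names are illustrative only.

```python
import math

branching_factor = 35   # average legal moves per position (figure from the text)
plies = 100             # 50 moves by each of the two players

# Work in log10 space to read off the order of magnitude directly.
log10_nodes = plies * math.log10(branching_factor)
print(f"35^100 is about 10^{log10_nodes:.1f}")

# Python integers have arbitrary precision, so the digit count
# of the exact value can also be computed.
digits = len(str(branching_factor ** plies))
print(f"35^100 has {digits} decimal digits")
```

The exponent comes out a little above 154, which agrees with the rounded figure of $10^{154}$ nodes.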

We begin with a definition of the optimal move and an algorithm for finding it. We then look at techniques for choosing a good move when time is limited. Pruning allows us to ignore portions of the search tree that make no difference to the final choice, and heuristic evaluation functions allow us to approximate the true utility of a state without doing a complete search. Section 5.5 discusses games such as backgammon that include an element of chance; we also discuss bridge, which includes elements of imperfect information because not all cards are visible to each player. Finally, we look at how state-of-the-art game-playing programs fare against human opposition and at directions for future developments.

We first consider games with two players, whom we call MAX and MIN for reasons that will soon become obvious. MAX moves first, and then they take turns moving until the game is over. At the end of the game, points are awarded to the winning player and penalties are given to the loser. A game can be formally defined as a kind of search problem with the following elements:

- $S_0$: The initial state, which specifies how the game is set up at the start.

- PLAYER(s): Defines which player has the move in a state.

- ACTIONS(s): Returns the set of legal moves in a state.

- RESULT(s, a): The transition model, which defines the result of a move.

- TERMINAL-TEST(s): A terminal test, which is true when the game is over and false otherwise. States where the game has ended are called terminal states.

- UTILITY(s, p): A utility function (also called an objective function or payoff function) defines the final numeric value for a game that ends in terminal state s for a player p. In chess, the outcome is a win, loss, or draw, with values 1, 0, or 1/2. Some games have a wider variety of possible outcomes; the payoffs in backgammon range from 0 to 192. A zero-sum game is (confusingly) defined as one where the total payoff to all players is the same for every instance of the game. Chess is zero-sum because every game has a payoff of either 0 + 1, 1 + 0, or 1/2 + 1/2. "Constant-sum" would have been a better term, but zero-sum is traditional and makes sense if you imagine each player is charged an entry fee of 1/2.
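To make the six elements concrete, here is a minimal sketch of them for tic-tac-toe. The board encoding (a 9-tuple of `"X"`, `"O"`, or `" "`), the helper `winner`, and the choice of payoffs 1, 0, and 1/2 (borrowed from the chess example above) are assumptions made for illustration, not definitions from the text.

```python
from typing import List, Optional, Tuple

State = Tuple[str, ...]  # assumed encoding: 9 cells, each "X", "O", or " "

S0: State = (" ",) * 9   # initial state: the empty board

# The eight winning lines (rows, columns, diagonals) as index triples.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8), (0, 3, 6),
         (1, 4, 7), (2, 5, 8), (0, 4, 8), (2, 4, 6)]

def winner(s: State) -> Optional[str]:
    """Hypothetical helper: the mark that has three in a row, if any."""
    for i, j, k in LINES:
        if s[i] != " " and s[i] == s[j] == s[k]:
            return s[i]
    return None

def player(s: State) -> str:
    """PLAYER(s): X (MAX) moves whenever both sides have placed equally many marks."""
    return "X" if s.count("X") == s.count("O") else "O"

def terminal_test(s: State) -> bool:
    """TERMINAL-TEST(s): someone has three in a row, or the board is full."""
    return winner(s) is not None or " " not in s

def actions(s: State) -> List[int]:
    """ACTIONS(s): indices of empty squares (none once the game is over)."""
    if terminal_test(s):
        return []
    return [i for i, cell in enumerate(s) if cell == " "]

def result(s: State, a: int) -> State:
    """RESULT(s, a): the board after the player to move marks square a."""
    return s[:a] + (player(s),) + s[a + 1:]

def utility(s: State, p: str) -> float:
    """UTILITY(s, p): 1 for a win, 0 for a loss, 1/2 for a draw (assumed payoffs)."""
    w = winner(s)
    if w is None:
        return 0.5
    return 1.0 if w == p else 0.0

# Usage: X takes the top row while O plays squares 3 and 4.
s = S0
for move in [0, 3, 1, 4, 2]:
    s = result(s, move)
print(terminal_test(s), utility(s, "X"), utility(s, "O"))
```

With these payoffs the two utilities sum to 1 in every terminal state, which is the constant-sum property described in the last bullet.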

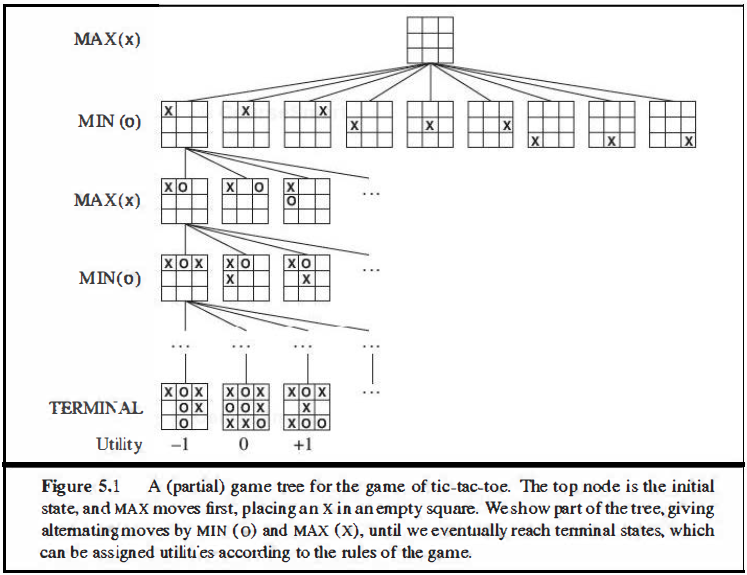

The initial state, ACTIONS function, and RESULT function define the game tree for the game: a tree where the nodes are game states and the edges are moves. Figure 5.1 shows part of the game tree for tic-tac-toe (noughts and crosses). From the initial state, MAX has nine possible moves. Play alternates between MAX's placing an X and MIN's placing an O until we reach leaf nodes corresponding to terminal states such that one player has three in a row or all the squares are filled. The number on each leaf node indicates the utility value of the terminal state from the point of view of MAX; high values are assumed to be good for MAX and bad for MIN (which is how the players get their names).

For tic-tac-toe the game tree is relatively small: fewer than 9! = 362,880 terminal nodes. But for chess there are over $10^{40}$ nodes, so the game tree is best thought of as a theoretical construct that we cannot realize in the physical world. But regardless of the size of the game tree, it is MAX's job to search for a good move. We use the term search tree for a tree that is superimposed on the full game tree, and examines enough nodes to allow a player to determine what move to make.
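The 9! bound for tic-tac-toe can be checked by brute force, since the whole game tree is small enough to enumerate. The sketch below counts leaf nodes by depth-first traversal; the board representation (a mutable list of 9 cells) and the function names are assumptions chosen for brevity.

```python
from math import factorial

# The eight winning lines (rows, columns, diagonals) as index triples.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8), (0, 3, 6),
         (1, 4, 7), (2, 5, 8), (0, 4, 8), (2, 4, 6)]

def has_line(board, mark):
    """True if `mark` occupies all three squares of some winning line."""
    return any(all(board[i] == mark for i in line) for line in LINES)

def count_leaves(board, mark):
    """Count terminal nodes in the game tree below `board`, with `mark` to move."""
    previous = "O" if mark == "X" else "X"
    # Only the player who just moved can have completed a line.
    if has_line(board, previous) or " " not in board:
        return 1
    total = 0
    for i in range(9):
        if board[i] == " ":
            board[i] = mark                       # try the move
            total += count_leaves(board, previous)
            board[i] = " "                        # undo it
    return total

leaves = count_leaves([" "] * 9, "X")
print(leaves, "terminal nodes; 9! =", factorial(9))
```

The count falls below 9! because many games end with a win before all nine squares are filled; under the standard rules it comes out to 255,168. The same exhaustive traversal is hopeless for chess, which is why a search tree that examines only part of the game tree is needed.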