Approximation Algorithms

Many problems of practical significance are NP-complete but are too important to abandon merely because obtaining an optimal solution is intractable. If a problem is NP-complete, we are unlikely to find a polynomial-time algorithm for solving it exactly, but even so, there may be hope. There are at least three approaches to getting around NP-completeness. First, if the actual inputs are small, an algorithm with exponential running time may be perfectly satisfactory. Second, we may be able to isolate important special cases that are solvable in polynomial time. Third, it may still be possible to find near-optimal solutions in polynomial time (either in the worst case or on average). In practice, near-optimality is often good enough. An algorithm that returns near-optimal solutions is called an approximation algorithm. This chapter presents polynomial-time approximation algorithms for several NP-complete problems.

Performance ratios for approximation algorithms

Suppose that we are working on an optimization problem in which each potential solution has a positive cost, and we wish to find a near-optimal solution. Depending on the problem, an optimal solution may be defined as one with maximum possible cost or one with minimum possible cost; that is, the problem may be either a maximization or a minimization problem.

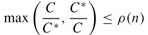

We say that an algorithm for a problem has an approximation ratio of ρ(n) if, for any input of size n, the cost C of the solution produced by the algorithm is within a factor of ρ(n) of the cost C* of an optimal solution:

$$\max\left(\frac{C}{C^*}, \frac{C^*}{C}\right) \le \rho(n) \qquad (35.1)$$

We also call an algorithm that achieves an approximation ratio of ρ(n) a ρ(n)-approximation algorithm. The definitions of approximation ratio and of ρ(n)-approximation algorithm apply for both minimization and maximization problems. For a maximization problem, 0 < C ≤ C*, and the ratio C*/C gives the factor by which the cost of an optimal solution is larger than the cost of the approximate solution. Similarly, for a minimization problem, 0 < C* ≤ C, and the ratio C/C* gives the factor by which the cost of the approximate solution is larger than the cost of an optimal solution. Since all solutions are assumed to have positive cost, these ratios are always well defined. The approximation ratio of an approximation algorithm is never less than 1, since C/C* < 1 implies C*/C > 1. Therefore, a 1-approximation algorithm produces an optimal solution, and an approximation algorithm with a large approximation ratio may return a solution that is much worse than optimal.
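As a small illustration of the definition, the ratio of a single solution can be computed the same way for both problem types. The function name `approximation_ratio` and the sample costs below are hypothetical, chosen only for this sketch.

```python
def approximation_ratio(cost: float, optimal_cost: float) -> float:
    """Return max(C/C*, C*/C) for positive costs C and C*."""
    if cost <= 0 or optimal_cost <= 0:
        raise ValueError("costs must be positive")
    # Taking the maximum makes the same formula work for minimization
    # (C >= C*) and for maximization (C <= C*).
    return max(cost / optimal_cost, optimal_cost / cost)


# Minimization: the algorithm's solution costs 12, an optimal one costs 10.
min_ratio = approximation_ratio(12, 10)

# Maximization: the algorithm's solution is worth 8, an optimal one is worth 10.
max_ratio = approximation_ratio(8, 10)
```

Here `min_ratio` is 1.2 and `max_ratio` is 1.25; both are at least 1, as the argument above requires. Note that this measures one instance only, whereas ρ(n) must bound the ratio over every input of size n.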

For many problems, polynomial-time approximation algorithms with small constant approximation ratios have been developed, while for other problems, the best known polynomial-time approximation algorithms have approximation ratios that grow as functions of the input size n. An example of such a problem is the set-covering problem.
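To make the set-covering example concrete, the following is a minimal sketch of the familiar greedy heuristic, which repeatedly picks the subset covering the most still-uncovered elements. Its approximation ratio is known to grow logarithmically with the size of the universe. The function name, the input representation (a list of Python sets), and the sample instance are assumptions made for illustration.

```python
def greedy_set_cover(universe, subsets):
    """Return indices of chosen subsets that together cover `universe`.

    Greedy rule: always take the subset that covers the largest number
    of elements not yet covered (ties go to the lowest index).
    """
    uncovered = set(universe)
    chosen = []
    while uncovered:
        best = max(range(len(subsets)),
                   key=lambda i: len(uncovered & subsets[i]))
        if not uncovered & subsets[best]:
            raise ValueError("subsets do not cover the universe")
        chosen.append(best)
        uncovered -= subsets[best]
    return chosen


# Hypothetical instance: subsets 0 and 1 form an optimal cover of size 2,
# but the greedy rule is lured by the larger subset 2 first.
universe = {1, 2, 3, 4, 5, 6}
subsets = [{1, 2, 3}, {4, 5, 6}, {1, 2, 4, 5}, {3}, {6}]
cover = greedy_set_cover(universe, subsets)
```

On this instance the sketch selects three subsets while two suffice, so the ratio for this input is 3/2.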

Some NP-complete problems allow polynomial-time approximation algorithms that can achieve increasingly smaller approximation ratios by using more and more computation time. That is, there is a trade-off between computation time and the quality of the approximation. An example is the subset-sum problem. This situation is important enough to deserve a name of its own.

An approximation scheme for an optimization problem is an approximation algorithm that takes as input not only an instance of the problem, but also a value ε > 0 such that for any fixed ε, the scheme is a (1 + ε)-approximation algorithm. We say that an approximation scheme is a polynomial-time approximation scheme if for any fixed ε > 0, the scheme runs in time polynomial in the size n of its input instance.
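To show what "taking ε as input" looks like in practice, here is a sketch of a standard list-trimming scheme for the subset-sum problem (maximization form: find the largest subset sum not exceeding a target). It assumes positive integer values and 0 < ε < 1; the function names and the sample instance are illustrative choices, not fixed by the text above.

```python
def trim(sorted_values, delta):
    """Keep a value only if it exceeds the last kept value by a factor > 1 + delta."""
    trimmed = [sorted_values[0]]
    last = sorted_values[0]
    for y in sorted_values[1:]:
        if y > last * (1 + delta):
            trimmed.append(y)
            last = y
    return trimmed


def approx_subset_sum(values, target, epsilon):
    """Return a subset sum z <= target; the scheme aims for optimal/z <= 1 + epsilon."""
    n = len(values)
    sums = [0]  # achievable sums found so far, kept sorted and trimmed
    for x in values:
        # Every old sum can be kept as is or extended by x.
        merged = sorted(set(sums) | {y + x for y in sums})
        # Trim near-duplicates, then discard sums that overshoot the target.
        sums = [y for y in trim(merged, epsilon / (2 * n)) if y <= target]
    return max(sums)


# Sample instance: the optimal sum is 307 = 104 + 102 + 101.
z = approx_subset_sum([104, 102, 201, 101], target=308, epsilon=0.40)
```

With ε = 0.40 the sketch returns 302, and 307/302 is well within the factor 1 + ε. A smaller ε trims less aggressively, so the lists grow and the running time rises while the answer moves closer to optimal.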

The running time of a polynomial-time approximation scheme can increase very rapidly as ε decreases. For example, the running time of a polynomial-time approximation scheme might be $O(n^{2/\epsilon})$. Ideally, if ε decreases by a constant factor, the running time to achieve the desired approximation should not increase by more than a constant factor. In other words, we would like the running time to be polynomial in 1/ε as well as in n.

We say that an approximation scheme is a fully polynomial-time approximation scheme if it is an approximation scheme and its running time is polynomial both in 1/ε and in the size n of the input instance. For example, the scheme might have a running time of $O((1/\epsilon)^2 n^3)$. With such a scheme, any constant-factor decrease in ε can be achieved with a corresponding constant-factor increase in the running time.
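The difference between the two example running times can be seen by halving ε and comparing the growth. The sketch below treats the two bounds as exact step counts with constant factor 1, which is an assumption made purely for illustration; the function names are hypothetical.

```python
def ptas_steps(n, epsilon):
    """Step count modeled on the example bound n^(2/epsilon)."""
    return n ** (2 / epsilon)


def fptas_steps(n, epsilon):
    """Step count modeled on the example bound (1/epsilon)^2 * n^3."""
    return (1 / epsilon) ** 2 * n ** 3


n = 10
# Factor by which the work grows when epsilon is halved from 1.0 to 0.5.
ptas_growth = ptas_steps(n, 0.5) / ptas_steps(n, 1.0)
fptas_growth = fptas_steps(n, 0.5) / fptas_steps(n, 1.0)
```

Under this model, halving ε multiplies the first count by n^(2/ε) (a factor of 100 for n = 10 and ε = 1), a factor that itself depends on n, whereas the second count grows by a factor of 4 regardless of n.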

When the approximation ratio is independent of n, we will use the terms approximation ratio of ρ and ρ-approximation algorithm, indicating no dependence on n.