Hopfield Networks

Introduction: The Hopfield network is another example of supervised learning.

Hopfield networks are inspired by particles in a magnetic field: The idea for Hopfield networks originated from the behavior of particles in a magnetic field. Every particle "communicates" (by means of magnetic forces) with every other particle (the particles are completely linked), and each particle tries to reach an energetically favorable state (i.e. a minimum of the energy function). For neurons, this state is known as activation. Thus, all particles or neurons rotate and thereby encourage each other to continue this rotation. In a manner of speaking, our neural network is a cloud of particles. Based on the fact that the particles automatically detect the minima of the energy function, Hopfield had the idea to use the "spin" of the particles to process information.

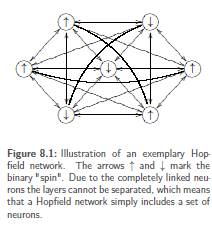

In a Hopfield network, all neurons influence each other symmetrically: A Hopfield network consists of a set $K$ of completely linked neurons with binary activation (since we only use two spins), with the weights being symmetric between the individual neurons and without any neuron being directly connected to itself. Thus, the state of $|K|$ neurons with two possible states $\in \{-1, 1\}$ can be described by a string $x \in \{-1, 1\}^{|K|}$.

The complete linkage yields a full square matrix of weights between the neurons. We will soon see the rules according to which the neurons spin, i.e. change their state.
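As a minimal sketch of what this weight matrix looks like, the following plain-Python check tests the two structural properties named above: symmetric weights and no neuron connected to itself (a zero diagonal). The function name `is_valid_hopfield_weights` and the example values are hypothetical and chosen only for illustration.

```python
def is_valid_hopfield_weights(w):
    """Return True if w is square, symmetric, and has a zero diagonal."""
    n = len(w)
    # The matrix must be square: one row and one column per neuron.
    if any(len(row) != n for row in w):
        return False
    for i in range(n):
        # No neuron is directly connected to itself.
        if w[i][i] != 0:
            return False
        # The weight from i to j equals the weight from j to i.
        for j in range(i + 1, n):
            if w[i][j] != w[j][i]:
                return False
    return True


# Hypothetical weights for |K| = 3 neurons (illustrative values only).
weights = [
    [0.0, 1.0, -2.0],
    [1.0, 0.0, 0.5],
    [-2.0, 0.5, 0.0],
]
print(is_valid_hopfield_weights(weights))
```

Because of the symmetry and the zero diagonal, only the $|K|(|K|-1)/2$ entries above the diagonal are independent.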

Additionally, because of the complete linkage there is no distinction between input, output, and hidden neurons. Thus, we have to think about how to input something into the $|K|$ neurons.

A Hopfield network consists of a set $K$ of completely linked neurons without direct recurrences. The activation function of the neurons is the binary threshold function with outputs $\in \{1, -1\}$.

The state of the network consists of the activation states of all neurons. Thus, the state of the network can be understood as a binary string $z \in \{-1, 1\}^{|K|}$.

Input and output of a Hopfield network are represented by neuron states: A set of $|K|$ particles in any given state automatically looks for a minimum. An input pattern of a Hopfield network is exactly such a state: a binary string $x \in \{-1, 1\}^{|K|}$ that initializes the neurons. The network then looks for the minimum to be taken (which we have previously defined by the input of training samples) on its energy surface. But how do we know that the minimum has been found? This is simple, too: when the network stops changing. A Hopfield network with a symmetric weight matrix that has zeros on its diagonal always converges (this guarantee is usually stated for updating one neuron at a time). The output is then a binary string $y \in \{-1, 1\}^{|K|}$, namely the state string of the network once it has found a minimum.

Input and output of a Hopfield network: The input of a Hopfield network is a binary string $x \in \{-1, 1\}^{|K|}$ that initializes the state of the network. After the network has converged, the output is the binary string $y \in \{-1, 1\}^{|K|}$ given by the new network state.
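The input/output behavior described here can be sketched in a few lines of Python. This section does not state the update rule itself, so the sketch assumes the commonly used one: neurons are updated one at a time, and each takes the sign of its weighted input with a threshold of 0 (ties resolved to 1). The function name `run_until_stable`, the `max_sweeps` safety limit, and the weight values are hypothetical.

```python
def run_until_stable(w, x, max_sweeps=100):
    """Update neurons one at a time until a full sweep changes nothing."""
    state = list(x)  # the input string initializes the network state
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            # Weighted input of neuron i from all other neurons
            # (w[i][i] is 0, so the neuron does not see itself).
            net = sum(w[i][j] * state[j] for j in range(n))
            # Assumed binary threshold function with threshold 0.
            new_value = 1 if net >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            return state  # the network has stopped: this is the output
    raise RuntimeError("no stable state reached within max_sweeps")


# Hypothetical symmetric weights with zero diagonal for |K| = 3 neurons.
weights = [
    [0, 1, -1],
    [1, 0, -1],
    [-1, -1, 0],
]
print(run_until_stable(weights, [1, -1, -1]))  # prints [1, 1, -1]
```

With these example weights, the input `[1, -1, -1]` settles after one change into `[1, 1, -1]`, and feeding that output back in leaves it unchanged.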

Significance of weights: The weights control how the network state changes. They can be positive, negative, or 0. For a weight $w_{i,j}$ between two neurons $i$ and $j$ the following holds:

- If $w_{i,j}$ is positive, it will try to force the two neurons to become equal; the larger the weight, the harder the network will try. If neuron $i$ is in state 1 and neuron $j$ is in state −1, a high positive weight will advise the two neurons that it is energetically more favorable to be equal.

- If $w_{i,j}$ is negative, the behavior is analogous, except that $i$ and $j$ are urged to be different. A neuron $i$ in state −1 would try to urge a neuron $j$ into state 1.

- Zero weights mean that the two neurons involved do not influence each other.

The weights as a whole thus lead the way from the current state of the network towards the next minimum of the energy function.
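To make the three cases concrete, here is a small sketch that compares the energy contributed by a single pair of neurons. The energy function is not spelled out in this section, so the sketch assumes the commonly used form in which one pair contributes $-w_{i,j} \, x_i \, x_j$; the function name `pair_energy` and the weight values are hypothetical.

```python
def pair_energy(w_ij, x_i, x_j):
    """Energy contributed by one pair of neurons (assumed form: -w * x_i * x_j)."""
    return -w_ij * x_i * x_j


for w_ij in (2.0, -2.0, 0.0):
    equal = pair_energy(w_ij, 1, 1)       # both neurons in the same state
    different = pair_energy(w_ij, 1, -1)  # neurons in opposite states
    print(f"w = {w_ij:+.1f}: equal -> {equal:+.1f}, different -> {different:+.1f}")
```

Under this assumption, a positive weight gives the lower energy when the two states are equal, a negative weight gives the lower energy when they differ, and a zero weight contributes nothing either way.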